Comments

Hi Lucie,

Yes, that's possible, and not even that complicated. How would the user draw the line, though? With a finger on a touch screen? With a Wacom-like tablet? Or with the mouse?

Cheers!

Sebastiaan

Check out SigmundAI.eu for our OpenSesame AI assistant!

Hi Sebastiaan,

The user will draw the line with the mouse or with a finger on a touch screen.

Thank you for your answer.

Lucie

Hi Lucie,

In that case, something like the logic below should do the trick. You may need to tweak the details though. But if you carefully read through the code, then you will probably be able to understand how it works and modify it for your purpose.
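As a minimal sketch of this kind of logic (not the code from the original post), the core of freehand drawing can be written in plain Python: take a stream of pointer samples and turn it into line segments. The function name `build_segments`, the `(x, y, pressed)` sample format, and the `min_dist` jitter threshold are all hypothetical and chosen for illustration. In an OpenSesame inline_script, the samples would presumably come from polling the mouse or touch position in a loop, and each segment would be drawn as a line on a canvas; that wiring is an assumption and is left out here.

```python
import math


def build_segments(samples, min_dist=2.0):
    """Convert (x, y, pressed) pointer samples into line segments.

    Each segment is a tuple (start_x, start_y, end_x, end_y). A new
    stroke starts whenever the button (or finger) goes down again.
    Moves shorter than min_dist are skipped to suppress jitter.
    """
    segments = []
    last = None  # last accepted point of the current stroke
    for x, y, pressed in samples:
        if not pressed:
            # Pointer released: the next press starts a new stroke
            last = None
            continue
        if last is not None:
            if math.hypot(x - last[0], y - last[1]) < min_dist:
                # Too close to the previous point, so ignore it
                continue
            segments.append((last[0], last[1], x, y))
        last = (x, y)
    return segments


# Hypothetical samples: two strokes separated by a release
samples = [
    (0, 0, False),
    (0, 0, True),
    (1, 0, True),    # jitter, skipped
    (10, 0, True),
    (10, 10, True),
    (20, 20, False),  # released
    (30, 30, True),
    (40, 30, True),
]
for segment in build_segments(samples):
    print(segment)
```

Keeping the segment logic separate from the drawing calls makes it easier to test the logic outside the experiment and to tweak details such as the jitter threshold.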

Cheers!

Sebastiaan


Hi Sebastiaan,

Thank you so much.

I will take a look and contact you again if necessary.

When it is done, can the implemented test be shared as an example experiment?

Best,

Lucie

> When it is done, can the implemented test be shared as an example experiment?

That's very generous! 👍️

There's no formal system for sharing experiments. However, you could upload it to the OSF in a public project. That's what I usually do.


Hi Sebastiaan,

I edited the experiment and I want to put it online.

I understand that the inline_script must become an inline_javascript, but how?

Thank you for your help,

Best regards,

Lucie

Hi Lucie,

> I edited the experiment and I want to put it online. I understand that the inline_script must become an inline_javascript, but how?

That depends on what you mean by putting it online.

inline_javascript item. JavaScript and Python are different programming languages. This translation process will at least be tricky, and perhaps not even possible, because the JavaScript API does not currently support everything that the Python API supports.

I hope this clears things up!

Cheers,

Sebastiaan


Hi Sebastiaan,

It is your second suggestion: I want people to be able to run the experiment in a browser. I am sorry, but I cannot find the "inline_javascript" item in OpenSesame.

Could you help me?

I will also share the experiment (that is already) with the community.

Best regards,

Lucie

> It is your second suggestion: I want people to be able to run the experiment in a browser. I am sorry, but I cannot find the "inline_javascript" item in OpenSesame.

This item is new in OSWeb 1.3, which is included in OpenSesame 3.2.7. So you probably need to update! However, as I said, translating your scripts to JavaScript may be tricky!


I actually didn’t have the latest version...

I will try because I need it.

I will let you know if I manage.

Another question: Is it possible to have the same visual rendering on the browser as on the computer?

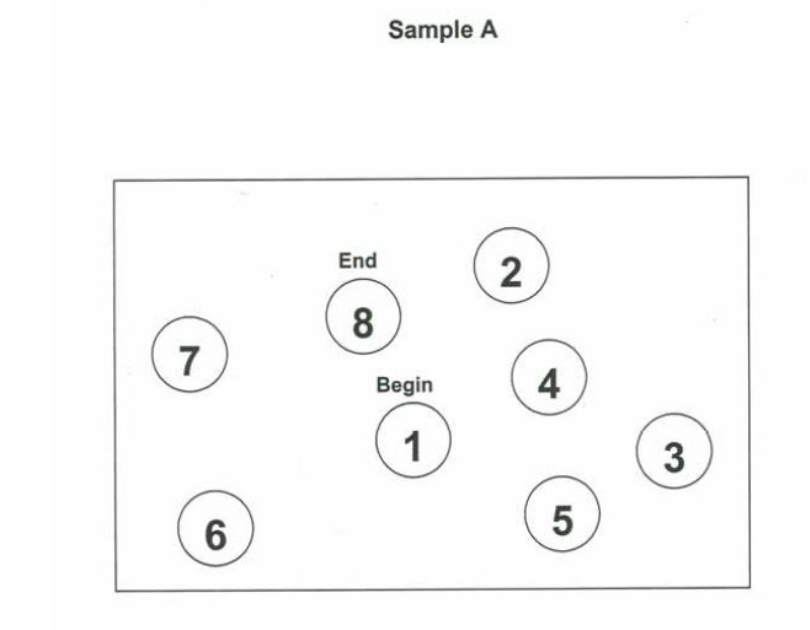

browser:

computer:

Thanks for everything,

Lucie

> Another question: Is it possible to have the same visual rendering on the browser as on the computer?

If you want to have the exact same rendering, then I would create images with the text and show these. Otherwise there will be slight differences between the desktop and the browser (and possibly even between different browsers).


OK, thank you for this solution!

Best regards,

Lucie

Hello Lucie,

You said that you were willing to share your Trail Making Test experiment.

I would be very interested in having it. How can I get a copy of your work?

Thanks a lot

Frédéric