Using visual AND auditory stimuli in the same loop/sequence for different trials?

Hei everyone.

First of all, I'm quite new here and to OpenSesame. I know how to build a basic experiment, but not much more...

Here is my problem:

- at the moment, my overall experiment is divided into 3 different OpenSesame tasks, meaning 3 .osexp files: one for my phonological modality, one for the semantic modality and another for the visual modality.

- the 3 tasks have almost the same design, except that the phonological and semantic ones both present auditory stimuli (using a sampler), whereas the visual one has a simple visual presentation (using a sketchpad).

- what we want to try now is to present the 3 modalities all together in random order

- and I'm struggling to build an experiment where, within the same block, the participant could either see a visual form on the screen (visual condition) or hear words (phonological and semantic conditions).

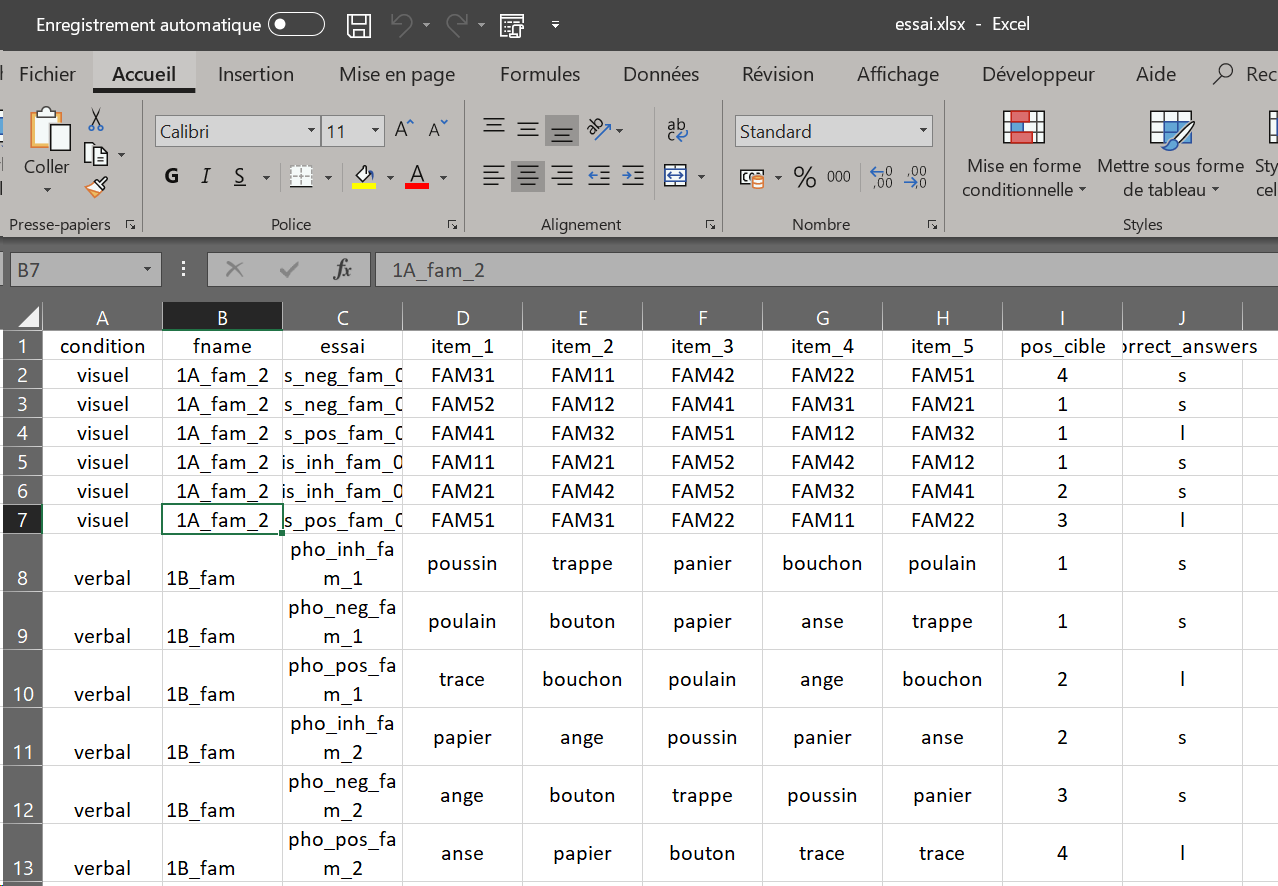

- I have differentiated the two types by adding a 'condition' column to my xlsx file and tried to use the 'run if' option, such as: run (or show?) if [condition] = 'visual' for the sketchpad with my visual stimuli and [condition] = 'verbal' for the auditory ones.

- Using the if statement doesn't prevent the items from being prepared, and I got different error messages (depending on my attempts). Most of the time, it seems that because run_if/show_if still allows the item preparation, the program searches for my visual stimuli with the audio extension (.wav here) or for my auditory stimuli with the image extension (.png).

For example: "cheval.png" does not exist (which indeed doesn't exist as the correct file is cheval.wav)

Details:

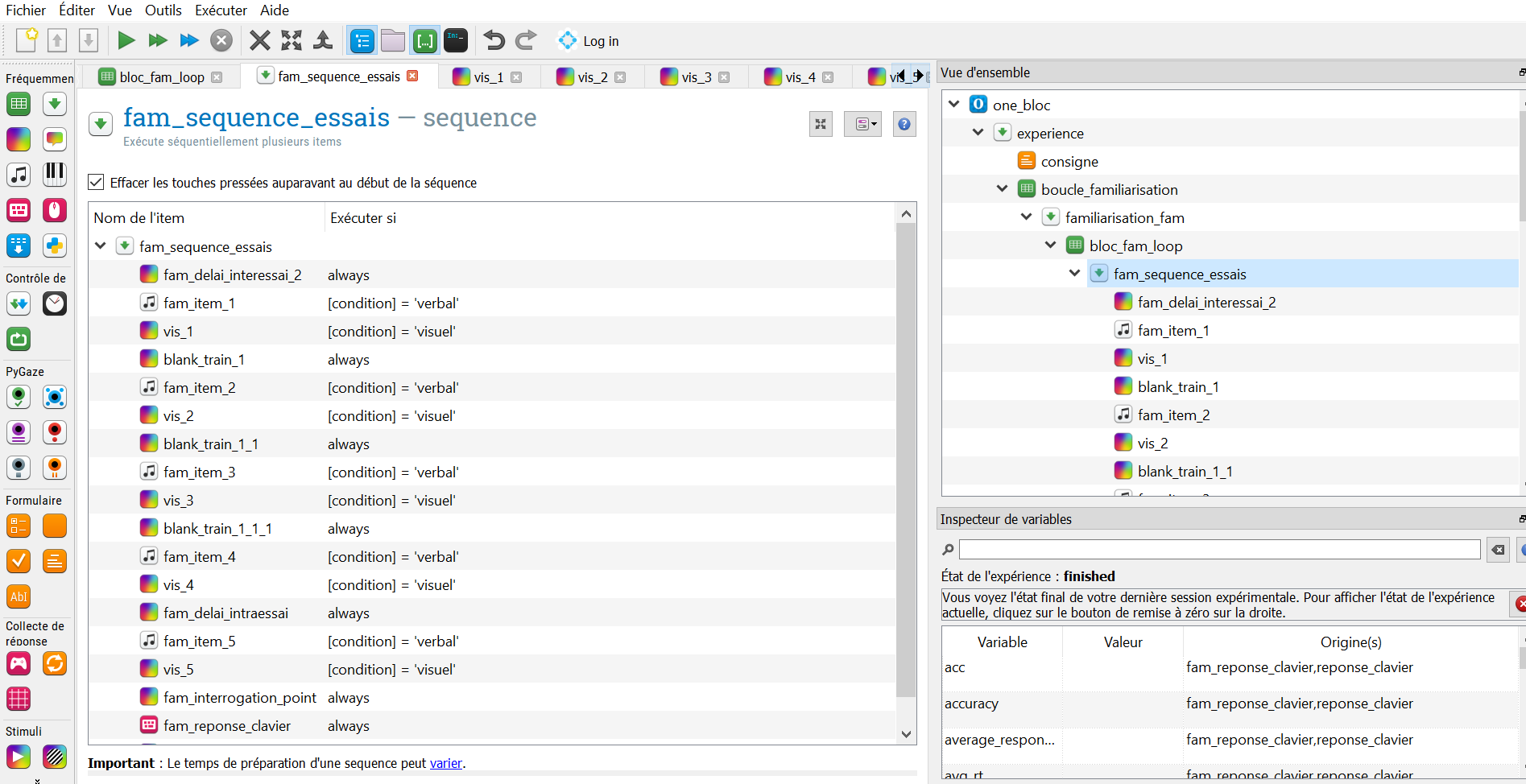

item-stack: experience[run].boucle_familiarisation[run].familiarisation_fam[run].bloc_fam_loop[run].fam_sequence_essais[prepare].vis_1[prepare]
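The item stack above ends in vis_1[prepare], which fits the description that the error happens while the sketchpad is being prepared, before any run-if test is applied. Below is a minimal plain-Python sketch of that behaviour as described in this post. It is a toy model, not OpenSesame's actual code: the `Item` class, `run_sequence` function, and the `aud_1` item name are hypothetical and only serve to illustrate why a run-if condition alone does not avoid the missing-file error.

```python
# Toy model (NOT OpenSesame's real implementation): a sequence prepares
# every item first, and only consults the run-if condition when running.

class Item:
    def __init__(self, name, required_file, run_if):
        self.name = name
        self.required_file = required_file  # function: trial -> filename
        self.run_if = run_if                # function: trial -> bool

    def prepare(self, trial, pool):
        # Preparation resolves the stimulus file whatever the condition is.
        filename = self.required_file(trial)
        if filename not in pool:
            raise FileNotFoundError(f'"{filename}" does not exist')

    def run(self, trial):
        return f"{self.name} ran"


def run_sequence(items, trial, pool):
    for item in items:       # prepare phase: run_if is not consulted
        item.prepare(trial, pool)
    log = []
    for item in items:       # run phase: run_if is consulted here
        if item.run_if(trial):
            log.append(item.run(trial))
    return log


pool = {"cheval.wav"}  # stand-in for the file pool
trial = {"stimulus": "cheval", "condition": "verbal"}

sketchpad = Item("vis_1", lambda t: t["stimulus"] + ".png",
                 lambda t: t["condition"] == "visual")
sampler = Item("aud_1", lambda t: t["stimulus"] + ".wav",  # hypothetical name
               lambda t: t["condition"] == "verbal")

try:
    run_sequence([sketchpad, sampler], trial, pool)
    error = None
except FileNotFoundError as exc:
    error = str(exc)

print(error)
```

In this model the verbal trial fails on the sketchpad even though its run-if condition is false, because the file lookup happens in the prepare step.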

Here is an example of what I would like to get one day... Is that even possible? Should I create another loop or sequence? I tried that in different ways, but I don't really know how to do it properly...

Well... I hope someone could give me some advice, thank you all.

PS: sorry for my english !

Comments

Hi,

Would it be sufficient to just put all the files into the same folder (i.e. the file pool)? That way, OpenSesame won't complain that it can't find items, provided that they exist. However, I am surprised that OS tries to prepare items even though the run_if statement should not allow them to run. Are you sure that the run if statement works as intended? For example, you can try to run the sketchpads without images (e.g. just some shapes) and see whether they show up on the screen even when the condition is not a visual one. Does that make sense?

Eduard

Hei !

My files (visual .png and verbal .wav) are already in the file pool and they exist. The problem was that it was trying to load my visual stimuli as verbal ones, because it wasn't taking the different conditions and run_if statements into account when preparing the items.

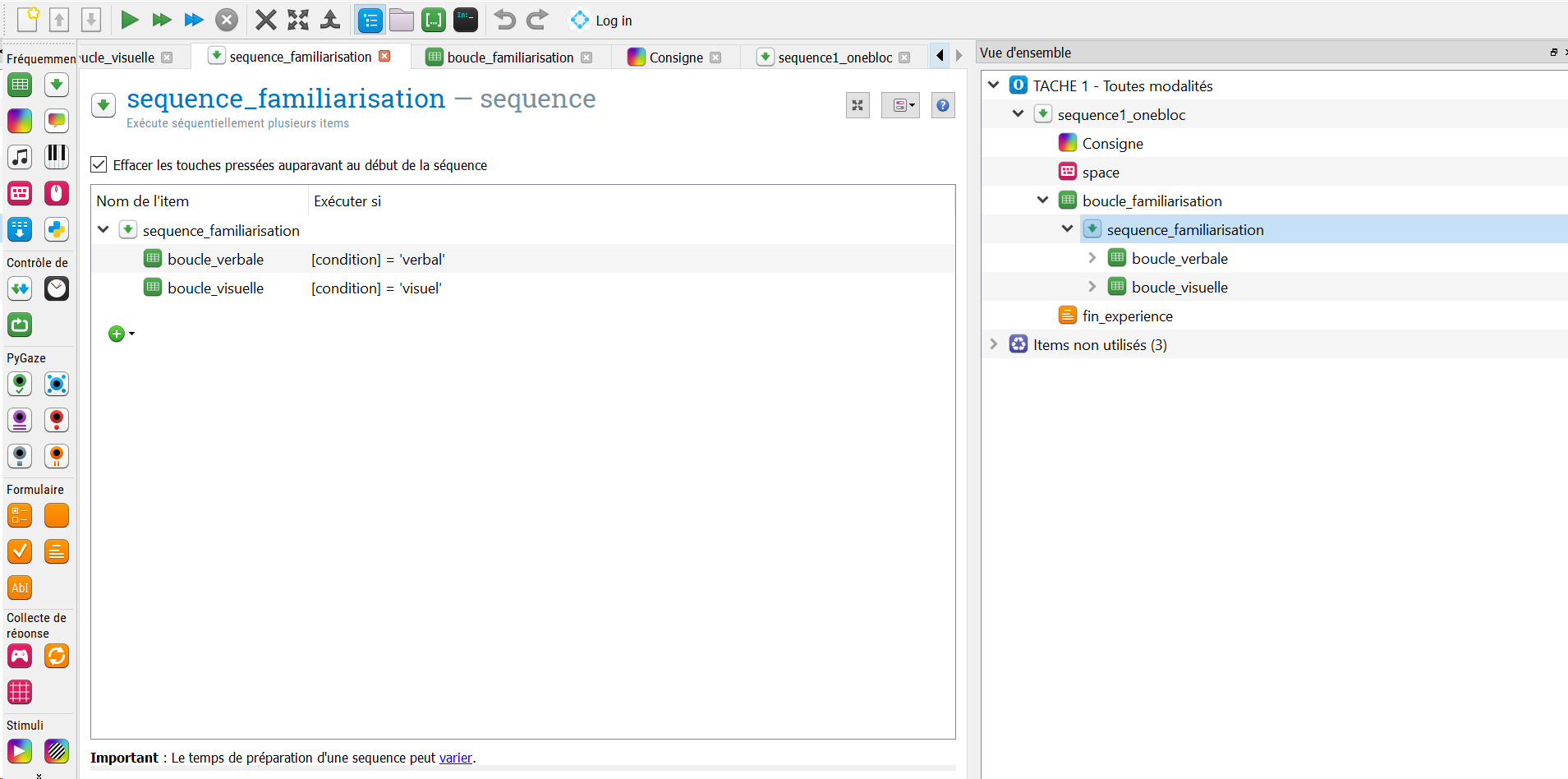

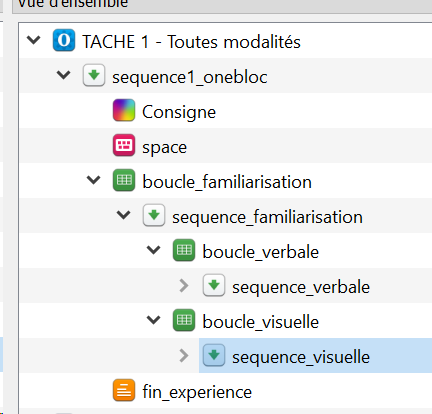

I tried to put in 2 different loops: a visual one and a verbal one (for my phonological & semantic conditions). So far, both are working, and it doesn't prepare my verbal stimuli in a visual format (.png), so that's cool!!

BUT as the loops are part of a sequence, OpenSesame runs them sequentially (well, makes sense).

I don't know how to make it pick the visual or the verbal condition randomly, i.e. run one loop or the other at random.

As you can see, I've built a training loop ("boucle_familiarisation") in which I loaded a file with the training trials, the conditions (verbal vs visual) and the correct file to get depending on the condition...

So far, as I've written, it's working, but sequentially: if I put my verbal loop first, it will run all my verbal trials, and the same goes for my visual one. In both loops, I loaded a file referring to the specific trials.

I don't know if it is the best solution.

So... well, how can I get my training loop to be random?

Thanks for your help.

Hello Eduard ! Thanks for your answer.

Do you mean in the same initial folder? Because I always put all my files into OpenSesame's file pool.

I tried another way: I made a training loop ("boucle_familiarisation") with a sequence and a file specifying my trials with my conditions and file name.

I made two loops: one for my visual stimuli/condition and another for my verbal stimuli/conditions (phonological & semantic). Each one has its specific files.

So far, it's working, but sequentially (makes sense indeed), and I don't know how to make it random, meaning that it would read my training loop file in random order so I won't have all the verbal trials and then all the visual ones.

Wooo! While writing my comment, I got an idea and it actually works:

I kept the two loops (one for my visual stimuli/condition and another for my verbal stimuli/conditions, phonological & semantic), but instead of giving each one its own file, I put the same file, mixing both conditions, in both my verbal and visual loops. It's quite simple, but I didn't think it would work with an "exit_if" statement: exit if [condition] = 'verbal' in the visual loop and vice versa.
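The idea of one trial file mixing both conditions, with each trial routed to the right kind of presentation, can be sketched in plain Python as follows. This is only an illustration of the logic, not OpenSesame code: the trial names other than "cheval", the `EXTENSIONS` mapping, and the `present` and `run_block` functions are all hypothetical, and the "show"/"play" tuples merely stand in for a sketchpad and a sampler.

```python
import random

# Hypothetical trial table mixing both conditions (one row per trial),
# analogous to a single file shared by the visual and verbal parts.
trials = [
    {"stimulus": "cheval", "condition": "verbal"},
    {"stimulus": "maison", "condition": "verbal"},
    {"stimulus": "forme_1", "condition": "visual"},
    {"stimulus": "forme_2", "condition": "visual"},
]

# Assumed mapping from condition to file extension.
EXTENSIONS = {"visual": ".png", "verbal": ".wav"}


def present(trial):
    """Build the file name for this trial and pick the presentation type."""
    filename = trial["stimulus"] + EXTENSIONS[trial["condition"]]
    if trial["condition"] == "visual":
        return ("show", filename)   # stand-in for a sketchpad
    return ("play", filename)       # stand-in for a sampler


def run_block(trials, seed=None):
    """Present all trials once, in random order, whatever their condition."""
    rng = random.Random(seed)
    order = list(trials)            # copy so the table itself is untouched
    rng.shuffle(order)
    return [present(t) for t in order]


events = run_block(trials, seed=1)
for action, filename in events:
    print(action, filename)
```

Because the file name is only built once the trial's condition is known, a verbal trial never asks for a .png file and a visual trial never asks for a .wav file, while the shuffle interleaves the two conditions within a block.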