Pairwise comparison in RM ANOVA with a between-subject factor in JASP

Hello,

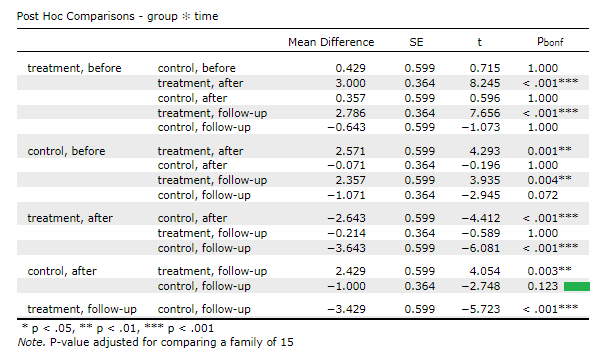

I have one repeated-measures factor (3 time points) and one between-subject factor (2 groups). I ran a repeated-measures ANOVA in JASP. All main effects and the interaction were significant, and now I want to run pairwise comparisons across all combinations of the factor levels. Under "Post Hoc Tests", I moved the interaction (group * time) to the right-hand box, and JASP produced the pairwise comparisons. These are the results:

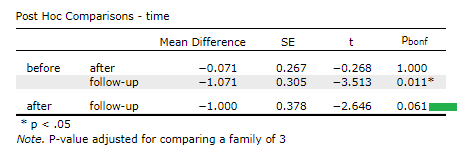

As an alternative, I excluded one of the groups (treatment) and ran a simple repeated-measures ANOVA on the control group only. These are the results of the pairwise comparisons:

I have two questions and was wondering if you could guide me:

- Does it make sense to use the first method to report the pairwise between-group and within-group comparisons?

- Why are the results of the second method (with the treatment group excluded) not identical to the corresponding values in the first method? For example, the p value for control_after vs control_follow-up (marked with a green line) is 0.123 in the first method, but 0.061 in the second.

I'm looking forward to hearing from you and any help is greatly appreciated.

(JASP 0.18.3)

Regards,

-Mohammadreza

Comments

This is entirely normal, and it happens for at least two reasons:

Regarding the after-versus-follow-up comparison in the control condition: Another reason the two ANOVAs produce different post-hoc results is that the error term (i.e., the variance or variances) for each comparison is estimated from the entire data set, not just from the particular conditions being tested in the t test. Thus, if you exclude some of the data (such as by excluding the treatment group), the variance estimates will differ, so the t values will differ, and so will the p values, even if there is no Bonferroni correction.
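
To make the point above concrete, here is a minimal Python sketch (outside JASP). It is not JASP's actual computation: it assumes a simplified error term that pools the variance of the after-minus-follow-up difference scores across the two groups only, and the difference scores are invented for illustration. The function names `t_control_only` and `t_pooled` are hypothetical. The control-group mean difference is the same in both calls; only the error term and the degrees of freedom change.

```python
from math import sqrt
from statistics import mean, variance

# Invented difference scores (after minus follow-up), one per participant.
control_diff = [1.0, 2.0, 0.0, 3.0, 1.0, 2.0]
treatment_diff = [5.0, -3.0, 8.0, -6.0, 9.0, -4.0]


def t_control_only(diffs):
    """t statistic using only this group's variance (treatment group excluded)."""
    n = len(diffs)
    se = sqrt(variance(diffs) / n)
    return mean(diffs) / se, n - 1


def t_pooled(diffs, other_diffs):
    """t statistic for the same mean difference, with the variance pooled over both groups."""
    n, m = len(diffs), len(other_diffs)
    pooled_var = ((n - 1) * variance(diffs) + (m - 1) * variance(other_diffs)) / (n + m - 2)
    se = sqrt(pooled_var / n)
    return mean(diffs) / se, n + m - 2


t_sep, df_sep = t_control_only(control_diff)
t_pool, df_pool = t_pooled(control_diff, treatment_diff)

print(f"control only: t = {t_sep:.3f}, df = {df_sep}")
print(f"pooled:       t = {t_pool:.3f}, df = {df_pool}")
```

Because the invented treatment group is much more variable than the control group, pooling inflates the standard error for the control comparison and shrinks its t value, even though the control data are unchanged.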

R

Dear @patc3 and @andersony3k,

Thanks a lot for your response. Now I understand what is going on, and this is not what I intend to do. I think the second method would be a better choice.

Shouldn't there be a checkbox for "Pool error term for Between-subject factors", similar to the one for RM factors?

Actually, I think there's a legitimate question concerning the unpooling of error-terms.

In an ANOVA, there's an assumption of equal error variances. Likewise, for repeated-measures, there's an assumption that the variance of the difference scores is the same for each pair of repeated-measures levels (this is part of the sphericity assumption). However, if one uses unpooled error terms in the post-hoc tests, there is no equal-error-variance assumption, thus producing an inconsistency between the ANOVA and the post-hocs: the former is assuming equal variances while the latter (unpooled post-hocs) is not.
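
As a rough illustration of the quantity the sphericity assumption refers to, the following Python sketch computes the variance of the difference scores for each pair of repeated-measures levels. The scores are invented, and the names `scores` and `diff_variances` are hypothetical; this only inspects the variances and is not a formal sphericity test.

```python
from itertools import combinations
from statistics import variance

# Invented scores: one list per time point, same participants in the same order.
scores = {
    "before":    [10, 12, 11, 14, 9, 13],
    "after":     [13, 14, 15, 16, 12, 17],
    "follow-up": [12, 15, 13, 17, 10, 15],
}

# Variance of the per-participant difference scores for every pair of levels.
diff_variances = {}
for a, b in combinations(scores, 2):
    diffs = [x - y for x, y in zip(scores[a], scores[b])]
    diff_variances[(a, b)] = variance(diffs)

for pair, var in diff_variances.items():
    print(pair, round(var, 3))
```

A pooled error term treats these variances as estimates of one common value; unpooled post-hocs use each pair's own variance instead.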

In the past, I've advocated that there be an option to conduct post-hoc tests in which no variances are pooled (not even for non-repeated-measures factors), with the understanding that it's not really part of any ANOVA (it's just a bunch of t tests, optionally corrected for repeated testing). I think that if you want to do this you can just use a bunch of filter-created variables and do an individual t test on each comparison you want to make, rather than rely on a "post-hocs" routine.
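
For readers who want to see what "a bunch of t tests, optionally corrected" amounts to, here is a minimal sketch in Python rather than JASP. It runs a separate paired t test for each pair of time points within one group, with no variance pooled, and applies a Bonferroni correction. The data are invented, the helper names `two_sided_p` and `paired_t` are hypothetical, and the p value is obtained by numerically integrating the t density so that the block needs only the standard library.

```python
from itertools import combinations
from math import gamma, pi, sqrt
from statistics import mean, stdev


def two_sided_p(t, df, steps=20000):
    """Two-sided p value for Student's t, via Simpson integration of the density."""
    c = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))

    def pdf(x):
        return c * (1 + x * x / df) ** (-(df + 1) / 2)

    upper = abs(t)
    h = upper / steps  # steps must be even for Simpson's rule
    total = pdf(0.0) + pdf(upper)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    central_half = total * h / 3  # P(0 < T < |t|)
    return min(1.0, max(0.0, 1.0 - 2.0 * central_half))


def paired_t(x, y):
    """Paired t test that uses only the two conditions being compared."""
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1, two_sided_p(t, n - 1)


# Invented control-group scores, same participants in the same order.
control = {
    "before":    [10, 12, 11, 14, 9, 13],
    "after":     [13, 14, 15, 16, 12, 17],
    "follow-up": [12, 15, 13, 17, 10, 15],
}

pairs = list(combinations(control, 2))
results = []
for a, b in pairs:
    t, df, p = paired_t(control[a], control[b])
    p_bonf = min(1.0, p * len(pairs))  # Bonferroni: multiply by the number of tests
    results.append((a, b, t, df, p, p_bonf))
    print(f"{a} vs {b}: t({df}) = {t:.3f}, p = {p:.4f}, p_bonf = {p_bonf:.4f}")
```

Note that the correction here covers only the three comparisons in this one group; a different family of comparisons would give different adjusted p values.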

R

@andersony3k

I see your point and I appreciate your help.

I was hoping that JASP gives me all the pairwise comparisons at the same time 🙃.

JASP will give you all of the pairwise comparisons at the same time, if you're willing to accept what many people regard as the correct approach, which is to always use pooled variances.

R

Thanks again, you are right. I agree with the pooled variance for RM factors.

I'm not an expert, but I've seen group-split post-hoc tests in many papers (SPSS doesn't offer this kind of post-hoc test for the interaction effect). Personally, I prefer not to pool in the variance of the other group when doing pairwise comparisons on the RM factor, since I couldn't find a convincing justification for it.

I think it would be great if JASP added an option to run the post-hoc tests for each group separately, or a "Pool error term for Between-subject factors" checkbox like the existing one for RM factors.